News from ETSON and its members

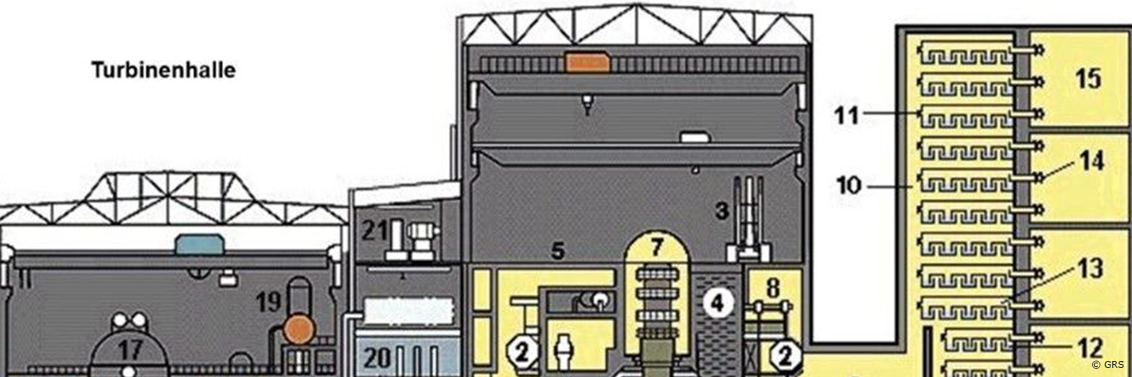

More than 30 pressurised water reactors of the Russian or Soviet VVER (water-water-energy reactor) type are currently in operation in Eastern and Central Europe, and several new plants are under construction. Mochovce-3, for example, started commercial operation in Slovakia in October 2023. The war in Ukraine, and not least the fact that the Zaporizhzhya nuclear power plant (NPP) became a theatre of war, has shown that technical knowledge of this plant type is still needed in Germany as well, for example to assess potential risks. Experts at GRS are therefore involved in numerous research projects on VVER reactors, including international ones.

As part of the EU research and innovation framework programme Horizon Europe/EURATOM, the European research partnership PIANOFORTE aims to improve knowledge and promote innovation in the field of radiation protection, in order to better protect the public, patients, workers and the environment in all scenarios of exposure to ionizing radiation.

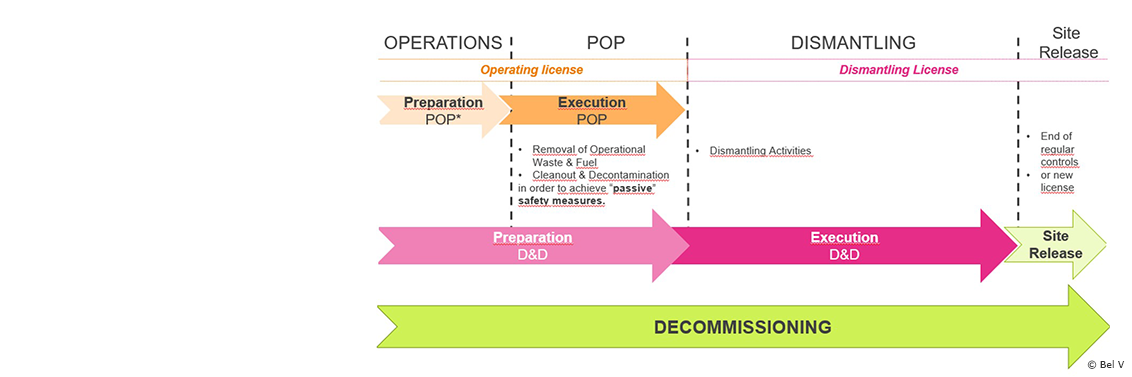

In accordance with the Belgian nuclear phase-out law, the third unit of the Doel NPP (referred to as “Doel 3”) was permanently shut down in September 2022 after 40 years of operation. Doel 3 then entered the so-called Post Operational Phase (POP, see Figure 1), during which the licensee notably prepares for its safe dismantling.

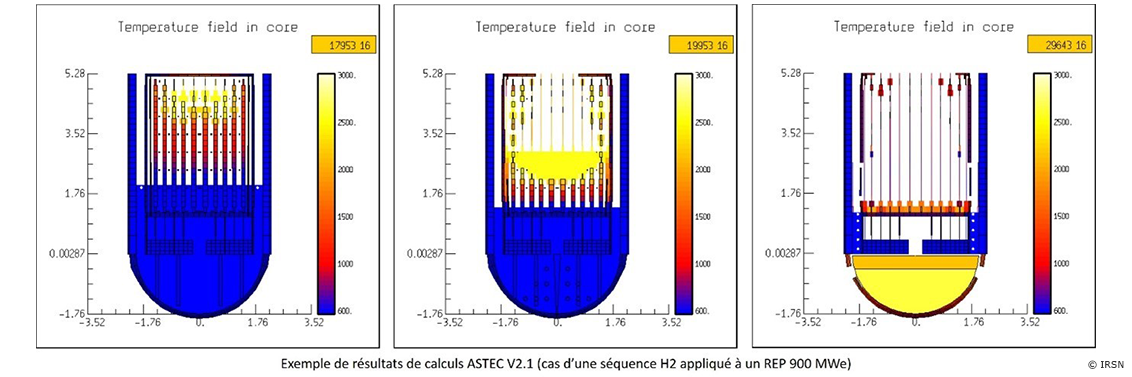

The Accident Source Term Evaluation Code (ASTEC) software system, developed by IRSN, simulates all phenomena occurring during a core meltdown accident in a water-cooled reactor, from the initiating event to the discharge of radioactive substances outside the containment. Version V3.1 of the ASTEC software, applicable to other nuclear facilities, is now available.

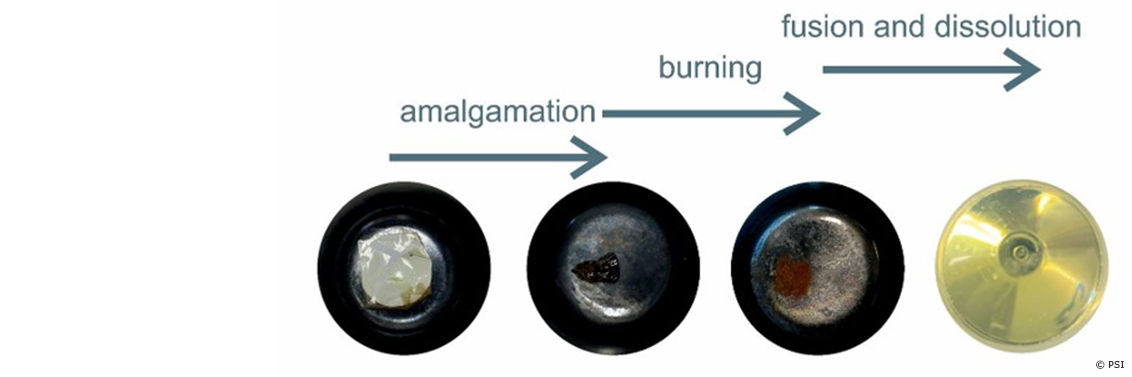

Chalk River Unidentified Deposits (CRUD) are dissolved and suspended solids produced by the corrosion of structural elements in the water circuits of nuclear reactors.

The chemical composition of CRUD is variable, as it depends on the composition of the reactor’s structural materials as well as on the types of refueling cycles. Recent internal investigations have found an unexpected but significant amount of silicon (Si) in CRUD. The chemical composition of CRUD holds key information for a better understanding of CRUD formation and of its possible impact on fuel reliability and contamination prevention.

The standard analytical methods available in the hot laboratory did not allow an easy quantitative determination of the amount of Si in CRUD. A new procedure has therefore been developed and tested with a synthetic CRUD named Syntcrud.

The adapted flex-fusion digestion method presented here provides reliable concentrations of several elements within CRUD, including Si, which was not possible with the methods previously used for ICP-MS measurement.

An ETSON Conference was held on 11 and 12 October 2023 in Brussels, Belgium. Bel V hosted this 2023 edition.

There were about 50 participants from different European TSOs, as well as representatives of the Japanese TSO NRA.

In 2023, four teams participated in the final phase. A Science Slam was organized at Bel V in Brussels by the "Junior Staff Program" on 11 October.

The Jožef Stefan Institute, as one of the TSOs in Slovenia, is currently supporting the activities of the Slovenian Nuclear Safety Administration during the unplanned outage of the Krško Nuclear Power Plant.

This autumn marked a significant event in the field of nuclear energy, not only for Ukraine but also globally. Ukraine was the first country to load Westinghouse nuclear fuel into a VVER-440 reactor at one of its NPPs. Until now, Ukrainian reactors of this type had used only Russian fuel. Ukraine has thus become the first nation to achieve independence from Russia in the supply of fresh nuclear fuel for operating Soviet-era VVER-1000 and VVER-440 reactors.

Since the early 2000s, there has been a significant increase in the number of innovative reactor designs worldwide. These designs are based on unique solutions and approaches that have not yet been seen in operating nuclear power plants or used previously in the nuclear energy sector.
